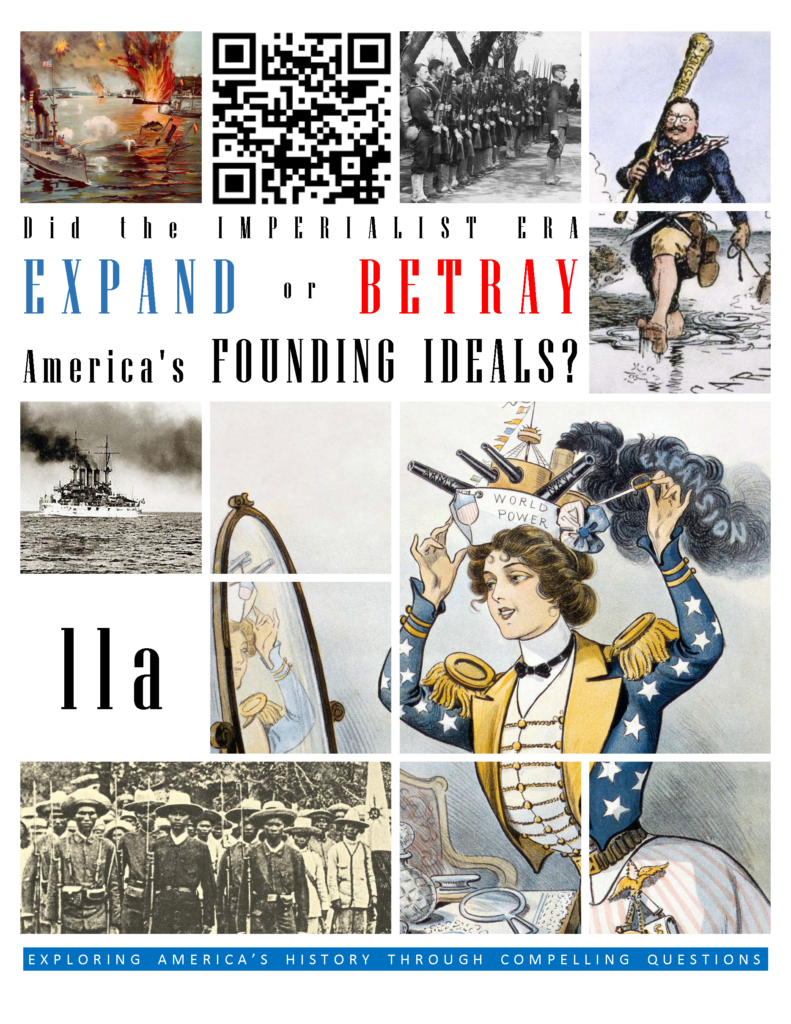

Since the early days of the Jamestown colony, Americans have been stretching their boundaries to encompass more territory. When the United States was founded in 1776, the practice continued. The 1800s were spent defining the nation's borders through negotiation and war, and as the 20th century dawned, many believed that the expansion should continue.

Different groups pushed for overseas expansion. Industrialists sought new markets for their products and cheaper sources of raw materials. Nationalists claimed that colonies were a hallmark of national prestige: the European powers had already claimed much of the globe, and America would have to compete or perish. Missionaries sought new lands in which to spread their message of faith. Social Darwinists such as Josiah Strong believed that American civilization was superior to others and that it was the duty of Americans to spread its benefits. Alfred Mahan wrote an influential thesis declaring that, throughout history, those who controlled the seas controlled the world. In his view, acquiring naval bases at strategic points around the world was therefore imperative.

Before 1890, American lands consisted of little more than the contiguous states and Alaska. By 1920, America could boast a global empire. American Samoa and Hawaii were added in the 1890s by force. The Spanish-American War brought Guam, Puerto Rico, and the Philippines under the American flag. Through negotiation and intimidation, the United States secured the rights to build and operate a canal in Panama.

The country could now legitimately call itself an empire. But the transition was not without its critics. The American Anti-Imperialist League argued that the conquest of foreign lands betrayed America's founding ideals. How could a nation founded on liberty conquer distant nations such as the Philippines, deny the Filipinos the rights accorded to Americans, and still claim to be a model of enlightened freedom for the world to follow? If the Americans could rise up against a king in 1776, why shouldn't the Filipinos be equally justified in their rebellion against American rule?

To advocates of imperialism, the answer was clear. America, as a leader among nations, had an obligation to spread the message of freedom and democracy. Although the cost might be high, less developed and less civilized nations needed the United States and the European powers to show the way. In the eyes of the imperialists, foreign intervention was a way to spread the ideals of the Founding Fathers. Imperialism was a positive good, not a betrayal.

What do you think? Did the Imperialist Era expand or betray America’s founding ideals?