INTRODUCTION

For most of American history, major changes in daily life came from new machines: the railroad, electricity, cars, and airplanes. In the last 30 years, one invention has been just as important, even though you cannot see it the same way you can see a highway or a power line. The internet has reshaped the economy, entertainment, and politics. It has changed how people work, how families communicate, and how students learn. Many Americans now carry a powerful computer in their pocket and can get information instantly.

In many ways, the internet has expanded opportunities by making information, communication, and creativity cheaper and faster than ever before. It can help people learn new skills, find jobs, build communities, and participate in public debates in ways that were difficult in earlier eras.

But the internet has also created new problems that are still unfolding. It can spread misinformation quickly. It can expose private information. It can reward anger more than truth. And it can leave behind people and communities without good access. Some historians argue that the internet represents the greatest expansion of knowledge access in human history. Others argue it may be weakening democratic trust.

So, when historians look back on the past 30 years, they will almost certainly debate the same question we are asking now: Has the internet made America a better place?

THE ORIGINS OF THE INTERNET

The internet did not begin as a shopping mall, a streaming service, or a place for social media. It began as part of a Cold War struggle over power, technology, and security. As you know, fears of a nuclear strike were high in the early decades of the Cold War, and American leaders worried about communication in such a crisis. If the Soviet Union attacked a major command center like the White House or the Pentagon, could the United States still send messages and coordinate a response? Cold War fears encouraged leaders to support communication systems that could keep working even if parts were damaged or destroyed. In the late 1960s, the government and military began funding research that led to ARPANET, an early network that linked computers at universities and research centers. Historians generally agree that the first message sent over what became the internet was in 1969, when a computer at UCLA sent the message “LO” to a computer at the Stanford Research Institute. The operators were trying to type “LOGIN,” but the system crashed after the first two letters.

The key idea of ARPANET was packet switching, which is still key to the internet today. Packet switching meant breaking information into small pieces and sending them through different routes. If one route was blocked, the data could travel another way. Thus, the internet was more like a spider’s web than a straight road between two computers, and it fulfilled the Cold War need for a way to pass messages even if one point in the network had been destroyed.
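For readers who want to see the idea in action, here is a minimal sketch of packet switching in Python. It is only an illustration, not how ARPANET was actually programmed. The tiny four-node network, the node names, and the function names are all made up for this example.

```python
import random
from collections import deque

def to_packets(message, size=4):
    """Split a message into numbered packets of at most `size` characters."""
    return [(seq, message[i:i + size])
            for seq, i in enumerate(range(0, len(message), size))]

def reassemble(packets):
    """Sort packets by sequence number and join the pieces back together."""
    return "".join(chunk for _, chunk in sorted(packets))

def find_route(links, start, goal, down=frozenset()):
    """Breadth-first search for a path that avoids any node listed in `down`."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            return path
        for nxt in links.get(node, []):
            if nxt not in seen and nxt not in down:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None  # no working route exists

# Hypothetical network: A can reach D through either B or C.
links = {"A": ["B", "C"], "B": ["D"], "C": ["D"], "D": []}

normal_route = find_route(links, "A", "D")               # ['A', 'B', 'D']
backup_route = find_route(links, "A", "D", down={"B"})   # ['A', 'C', 'D']

packets = to_packets("HELLO FROM UCLA")
random.shuffle(packets)          # packets may arrive out of order
restored = reassemble(packets)   # 'HELLO FROM UCLA'
print(normal_route, backup_route, restored)
```

When node B is marked as down, the search finds the route through C instead, and the numbered packets can be put back in order no matter how they arrive.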

It wasn’t just the military and government that were working on the early internet. Before the internet, scientists and researchers had to wait for books or journals to be published to find out what their colleagues were working on. They wanted a quicker way to share their ideas and data. To achieve this, they linked computers through telephone lines and developed common rules for sending information from one computer network to another. These rules are called protocols, and the most important was TCP/IP. When many different networks followed the same rules, they could become one larger “network of networks.” In 1983, TCP/IP became the standard protocol for ARPANET, and many historians mark that moment as the true birth of the modern internet.

Notice that the internet was not suddenly born overnight, but rather was developed over time, and its inventors did not have today’s world of smartphones, apps, streaming video and social media in mind when they made it. All of these developments happened over time, and each was the response to a particular need people had at the moment.

THE INTERNET & THE INFORMATION AGE

As the internet developed, the American economy was also shifting in deeper ways. By the late 1900s, people were starting to talk about a new era in history: the Information Age. In earlier periods, the most important resources were often physical. In the Industrial Revolution, economic power came from factories, machines, railroads, oil, and mass production. In the Information Age, power increasingly comes from data, communication, and knowledge.

In other words, the internet mattered not only because it was a new technology, but also because it fit into a new kind of economy where information, communication, and speed became more valuable than ever.

This does not mean that factories and physical goods stopped mattering. Americans still need food, housing, and energy. But many of the fastest-growing industries began to focus on creating, organizing, selling, and controlling information. The Information Age has grown alongside globalization. In the 1970s and 1980s, many American companies moved manufacturing overseas to places where labor was cheaper. This weakened old industrial regions in the United States and helped create the Rust Belt. At the same time, the United States grew more dependent on jobs tied to technology, finance, education, media, and services.

However, the Information Age has never been evenly shared. Many countries and regions remain deeply tied to agriculture or factory production, and even within the United States, not all communities benefited equally from the shift. In some places, globalization and the Information Age brought new opportunities. In other places, these changes meant job loss, wage pressure, and a sense that the economy had changed too fast.

The shift to the Information Age has also increased the demand for higher education. In the early 1900s, the country went through a major change in which high school became increasingly important for working in a modern industrial economy and towns across the nation built high schools. After World War II, college became much more common, especially as returning veterans used the GI Bill to attend universities. Today, as more jobs are tied to information, technology, and specialized skills, some form of education or training beyond high school has become close to essential for people who want to work in the information sector.

HOME COMPUTERS & THE EARLY INTERNET

In the 1960s and 1970s, computers were mostly huge mainframes owned by universities, the military, and large corporations. Ordinary Americans rarely touched one directly. But in the late 1970s and early 1980s, personal computers began shrinking in size and price. Companies like Apple, IBM, and Commodore marketed computers for home and small business use. This was a major transition: computers were no longer just tools for experts in labs. They were becoming consumer products.

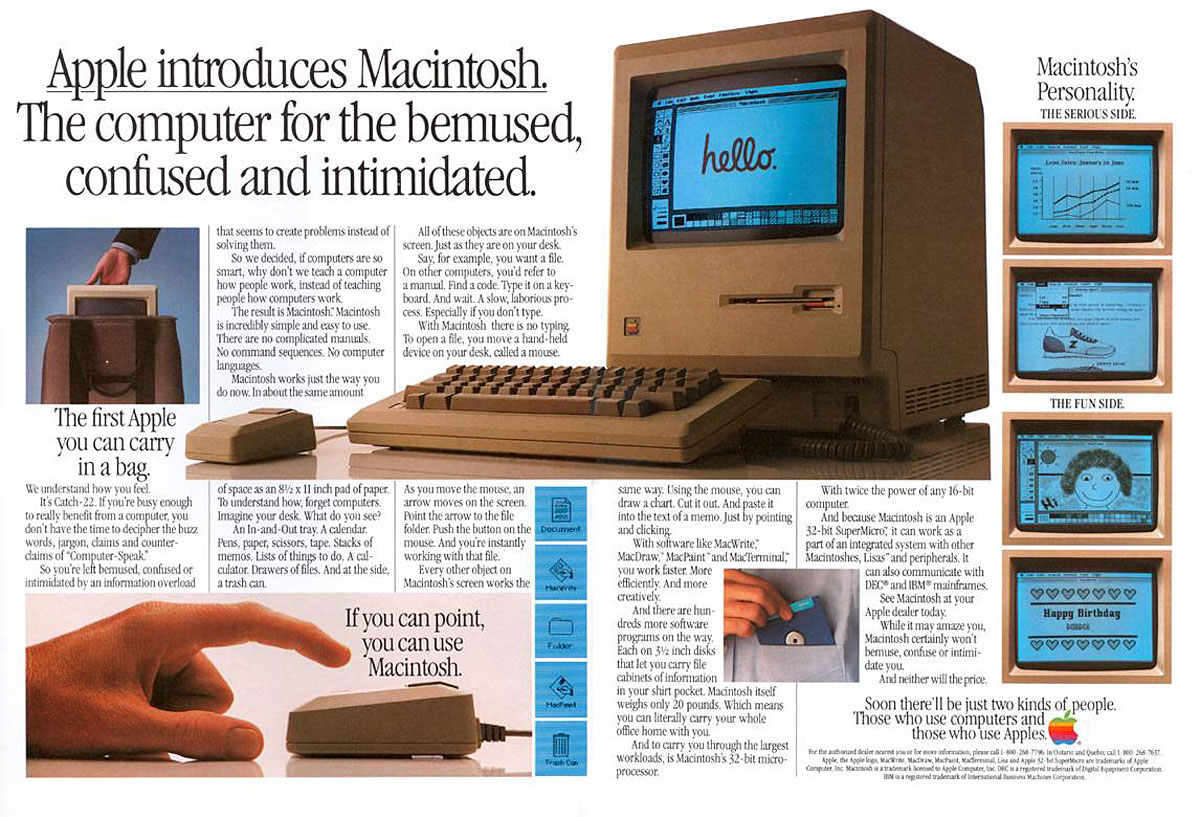

A key moment came in 1984, when Apple co-founder Steve Jobs introduced the Macintosh, a computer designed for everyday users. Unlike earlier machines that required typing commands, the Macintosh used a graphical user interface (GUI) with windows, icons, and a mouse. That design made computers feel less intimidating and helped expand the audience beyond hobbyists.

Many Americans also encountered computers before they owned one at home. By the 1980s, offices increasingly used computers for payroll, spreadsheets, and word processing. Schools began adding computer labs, and students practiced typing, played educational games like Oregon Trail, and learned basic programming. This “workplace and classroom” exposure helped normalize computers and prepared families for the idea that a home computer could be useful, not just a luxury.

Primary Source: Advertisement

An ad for the first Macintosh emphasizing its user-friendly features, mouse, and graphical user interface featuring windows and icons.

But home computers and the internet are two different things. Most people who bought computers in the 1980s had no idea that they would one day be used to connect to the internet. A turning point came in the 1990s with the development of the World Wide Web (www), which made it easier for ordinary people to use the internet through web browsers. The distinction between the internet and the World Wide Web seems trivial today, but it mattered in history. The internet is the underlying infrastructure that connects computers together (developed mainly in the 1960s–1980s), while the World Wide Web is a layer on top of the internet that makes it easier for everyday users to click through pages.

What made the World Wide Web easier was simplicity. Earlier internet use often required typing commands and knowing exact addresses for services (for example, logging into a specific computer to access files). The World Wide Web introduced a system of web pages that could be connected by clickable hyperlinks, so users could move from one page to another without memorizing complicated commands. The Web also depended on web browsers, which turned the internet into something people could see and navigate. Early browsers like Mosaic and Netscape helped popularize the idea of “surfing” the web. Browsers made the internet feel more like reading and exploring than like programming. Over time, Chrome, Firefox, and Safari replaced the original browsers as the most popular options.

Primary Source: Movie Poster

The movie You’ve Got Mail captured the spirit of the early internet when people were excited about email and the possibilities the internet promised.

Another key development that helped was the Domain Name System (DNS), which translates easy-to-remember names (like inquiryhistory.com) into the number-based IP addresses that computers use. While DNS was not invented specifically for the Web, it became even more important in the 1990s because it made it far easier for everyday users to find websites without needing to know strings of numbers.
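The basic job DNS does can be pictured as looking up a name in a directory. The sketch below is a toy version in Python, not the real system, which spreads its records across many servers around the world. The table, the function name, and the addresses are all made up for illustration. The numbers come from a range set aside for examples and are not the real addresses of any site.

```python
# Hypothetical lookup table. The addresses are placeholders from a range
# reserved for documentation, not the real addresses of these sites.
RECORDS = {
    "inquiryhistory.com": "192.0.2.10",
    "example.org": "192.0.2.20",
}

def resolve(name):
    """Return the address on file for a name, or None if the name is unknown."""
    cleaned = name.strip().lower().rstrip(".")  # names are not case-sensitive
    return RECORDS.get(cleaned)

print(resolve("InquiryHistory.com"))   # 192.0.2.10
print(resolve("not-a-real-site.test")) # None
```

The user only has to remember the name. The computer does the work of finding the number.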

Many families first got online at home using dial-up connections that ran through phone lines. Companies like America Online (AOL) provided internet connection service and software that helped make the early internet feel user-friendly. For many people, one of the first everyday uses of the internet was email, which made long-distance communication faster and cheaper than phone calls or letters. People also joined online chat forums and explored basic web pages. Even though the internet was still slow, it felt revolutionary.

Pop culture captured the excitement at the time in the 1998 romantic movie You’ve Got Mail, starring Tom Hanks and Meg Ryan. The plot of the story revolves around their daily ritual of arriving home and checking their email, eventually leading to love. Email is portrayed in the film as a small daily miracle, and the movie shows how online communication could create relationships that felt real, even between people who had never met face-to-face.

As the internet took off and Americans got used to going online (perhaps even finding love like Tom Hanks and Meg Ryan), money began flowing in. The result was the Dot-Com Bubble. Investors poured money into internet companies, even those with little profit, thinking that soon everything would be online and the key to getting rich was getting in early. Many of these start-up companies had no clear long-term revenue model, and some were valued more for hype and expectations than for real earnings. When they failed, which many did, the stock market dropped sharply in 2000–2001. This “dot-com crash” showed both the excitement and instability of the new digital economy.

A company that survived the dot-com crash was Google. In the mid-1990s, as the internet exploded, early tools struggled to keep up. Some, like Yahoo, relied partly on human-made directories like phone books, while others, like AltaVista, tried to search the web more directly. These search engines were experiments in a new problem: how to find information on the growing internet. In 1998, Stanford graduate students Larry Page and Sergey Brin launched Google, which became famous for ranking pages by relevance using links between sites as clues about trust and importance. The result was that Google usually produced faster, cleaner results, and Google became Americans’ go-to search engine. By the early 2000s, everyone knew what it meant “to google” something.
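The idea of treating links as clues about importance can be shown with a very small Python sketch. This is a deliberate simplification that only counts how many other pages link to each page. It is not Google's actual ranking method, and the page names and function name are hypothetical.

```python
from collections import Counter

def rank_by_inbound_links(links):
    """Order pages from most to least linked-to (ties broken alphabetically)."""
    counts = Counter({page: 0 for page in links})
    for source, targets in links.items():
        for target in set(targets):      # count each linking page once
            if target != source:         # ignore pages linking to themselves
                counts[target] += 1
    return sorted(counts, key=lambda page: (-counts[page], page))

# Hypothetical mini-web: each page lists the pages it links to.
links = {
    "a.example": ["c.example"],
    "b.example": ["c.example", "a.example"],
    "c.example": [],
}

print(rank_by_inbound_links(links))
# ['c.example', 'a.example', 'b.example']
```

Here two pages link to c.example, one links to a.example, and none link to b.example, so c.example is listed first.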

By the late 1990s, many families could get online, but the experience was still limited by dial-up. It was slow, it tied up the phone line, and people had to “log on” for a short session rather than staying connected all day. Then, broadband changed everything. Broadband used a separate, permanent connection that was faster and always on, making the internet feel less like an occasional activity and more like a basic utility as important as electricity and running water. This shift opened the door to bigger changes, like online shopping, streaming video, and online gaming. Since then, mobile access through smartphones has made the internet even more integrated into daily life.

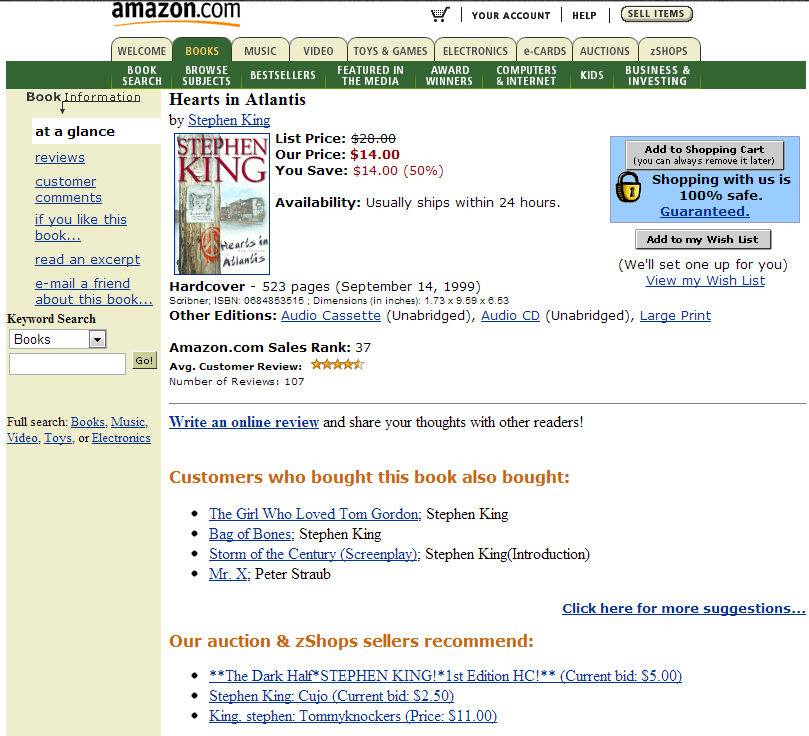

Primary Source: Screenshot

The Amazon website as it appeared in 2000 after the company had already begun branching out and selling more than just books.

THE GROWTH OF E-BUSINESS

As the economy became more focused on information and data, and broadband access made fast and reliable connections normal, the internet offered new ways to buy and sell.

Online shopping, also called e-commerce, grew rapidly. The most famous of all the American online stores, Amazon, was founded in 1994 as an online bookstore, but has grown to become a massive marketplace that can deliver almost anything.

As e-commerce expanded, traditional brick-and-mortar stores struggled. For decades, Americans had shopped at department stores like Sears, JCPenney, K-Mart, and Macy’s. They spent weekends in suburban shopping malls. In the 2000s and 2010s, these stores lost customers as online shopping became easier and cheaper, and shopping malls across the country closed. Brick-and-mortar stores that have survived usually have an online option for customers in addition to their physical locations.

It’s not just selling to individual consumers that has shaped the online economy. Business-to-business (B2B) sales have skyrocketed as well. No longer do offices stop at the local store for pens and paper. Now, they simply order in bulk online.

While new corporations like Amazon were killing off old ones like K-Mart, the internet also made it easier for small entrepreneurs to get started. People can sell used items on eBay. Artists and crafters can sell on Etsy. Payment tools like Square have helped small stores and individual vendors accept credit cards, even at impermanent locations like food trucks, farmers markets, and craft fairs.

Banking has changed too. Services like PayPal and apps like Venmo made it normal to send money digitally. People can log into their bank’s app or website and quickly transfer money. Over time, the old habit of writing checks has become less common.

Many government services have also moved online. Citizens can now renew licenses, fill out forms, file taxes, and access public information on government websites.

Primary Source: Photograph

Apps like Uber have created new opportunities for workers who want to participate in the gig economy.

Another major shift has been the gig economy. To make extra money, or instead of working at traditional jobs, thousands of Americans have begun doing short-term work that could not exist without the internet. Companies like Uber, Lyft, and DoorDash offer flexible work. The gig economy is not without its critics, however. Worries that gig workers lack benefits and job security are growing, and in some states the government has started to pass laws regulating this new corner of the economy.

ENTERTAINMENT GOES DIGITAL

Just as the shift from vaudeville to movies revolutionized entertainment in the 1920s, the Information Age upended the entertainment industry at the start of the 2000s.

Video games started in arcades, where people played short games by inserting coins. In the late 1970s and 1980s, home consoles like Atari brought gaming into living rooms. In the 1980s and 1990s, companies like Nintendo and Sega helped turn console video games into a huge part of youth culture.

In the 1990s and 2000s, improvements in computer power and internet connections made online gaming common. Players can now compete or cooperate with people around the world. Handheld gaming on systems like the Game Boy and, later, the Nintendo Switch, as well as smartphone games, also became widespread.

Primary Source: Photograph

Handheld games like this Leapster have replaced arcades and opened up a whole new category of entertainment.

Movies and TV also changed. In the past, families watched TV shows when the broadcasters played them, rented VHS tapes and DVDs from a brick-and-mortar store or went to movie theaters.

Over time, the internet helped push entertainment away from physical stores and scheduled programming and toward on-demand viewing at home. The big shift was streaming, which lets people watch instantly over the internet.

Netflix is the best example of this change. Netflix began in the late 1990s as a DVD-by-mail service but later shifted toward streaming. Streaming accelerated the decline of video rental stores. Blockbuster and Hollywood Video, chains of rental stores once found all over the country, filed for bankruptcy as streaming and mail-order rentals changed people’s habits.

Streaming also changed who could create entertainment. YouTube allowed anyone to post videos and build an audience without a TV studio.

CELL PHONES TO SMARTPHONES

The invention of the cell phone added a new layer to the internet revolution.

The idea behind cellular communication is almost as old as the telephone, but the first modern cell networks were not created until the technologies that make them possible were fully formed in the late 1970s and early 1980s. Japan launched one of the first commercial cellular networks in 1979, and in the United States, the first widely recognized commercial cell network (1G) began operating in 1983. The first cell phones were huge and required batteries that filled car trunks, so they were often called “car phones.” In the 1990s, the major cell phone networks built out coverage for most American cities. By 2000, cell phone stores were popping up around the country, cell phones were small enough to fit in pockets, and owning a cell phone was becoming common.

Primary Source: Photograph

Apple’s CEO Steve Jobs introducing the first iPhone on stage in 2007.

Then in 2007, Apple, led by Steve Jobs, released the iPhone, a touchscreen smartphone that redefined what a phone could be, and everything changed. Google and Samsung quickly copied the basic form of the iPhone, and in 2008, Apple launched the App Store. By the early 2010s, the expansion of 4G networks helped make smartphones fast enough for video streaming, constant social media use, and always-on apps. The old days of cell phones were over, and with smartphones, almost anyone can carry a powerful computer in their pocket.

With smartphones and the app store, the app economy was born. Instead of using a few programs on a desktop computer, Americans began relying on hundreds of small apps for daily tasks. Today, people order food, track workouts, find dates, listen to music, get directions, and manage money through apps.

Smartphones changed social norms and continue to shape them. Even before smartphones took over, texting helped make short, constant communication feel normal. Once smartphones arrived with their full keyboards, many conversations moved away from phone calls and toward quick written messages. Later, the advent of emoji accelerated this trend. In-person social norms changed as well. Now, friends stop to look up information in the middle of their conversations. Drivers navigate with GPS instead of paper maps. Many workplaces have begun to expect their employees to answer emails and messages outside of normal business hours. Smartphones also changed how people act in public and how relationships work. For example, it became normal for friends to sit together while also scrolling on their own devices and common to take photos of meals, vacations, and events specifically to post them online.

While smartphones have opened a world of possibilities, they also raised concerns. Constant notifications can weaken attention. Screen time can disrupt sleep and damage in-person social relationships. Some people worry that life has become more performative, with both students and adults trying to document experiences instead of simply living them.

WEB 2.0 & SOCIAL MEDIA

The early internet was similar to a library, with thousands of webpages that people could log on and read. But like a library, where only a few authors write books compared to the many readers, the internet had few authors compared to its many users. Then suddenly the internet shifted to a place where millions of users created information. This change transformed the internet into something more social, more interactive, and more influential in daily life. The shift to interactivity was nicknamed Web 2.0. In Web 2.0, users no longer just read what’s online. They post their own photos, videos, and ideas.

Knowing a few specific examples helps us see this shift over time, because different platforms made different parts of Web 2.0 possible.

In the late 1990s and early 2000s, people began using chat rooms, forums, and comment sections on web pages. Soon after, early social media sites made it possible to create online profiles and share life updates. MySpace launched in 2003 and became one of the first widely used social media sites. Facebook, launched in 2004, expanded quickly and eventually became the dominant social network. Over time, social media diversified to include video and then variations on the way people shared their lives. YouTube (2005), Twitter/X (2006), Instagram (2010), Snapchat (2011), and TikTok (launched internationally in 2017) all helped create an internet that was increasingly centered on sharing, not just on reading.

Smartphones mattered here. Once phones had cameras and high-speed connections, people could upload photos and videos instantly. Social media became a constant part of daily life, not something you did only on a home computer.

Primary Source: Screenshot

A sample of the social media options available in 2000.

Social media has helped people find communities and spread ideas quickly, and it continues to shape how Americans communicate and organize. It has played a role in political movements and protests by helping organizers share information, raise money, and attract attention from the media. One dramatic example outside the United States was the Arab Spring of 2011. In countries such as Tunisia and Egypt, protesters used social media to spread videos, share information about demonstrations, and draw international attention. In Egypt especially, Facebook and Twitter/X were widely used to promote protests and share updates. Social media did not cause these uprisings by itself, but it helped people coordinate faster than older methods. Over time, however, authoritarian governments adapted. Some governments learned to monitor social media, spread their own propaganda, and use digital tools for surveillance. A domestic example of social media’s political impact was the rise of #BlackLivesMatter (2013–2015). After the hashtag began spreading online, activists used platforms like Twitter/X, Facebook, and Instagram to share videos, organize protests, and push the issue of police violence and racial justice into national news.

A new kind of celebrity also emerged with the growth of social media: influencers. Influencers build large audiences and earn money through sponsorships, advertising, and product promotion. In some cases, top influencers can earn millions of dollars through brand partnerships. In one way, they are much like the celebrities of old who appear in advertisements on television or in magazines, but in many ways the influencers of social media are an entirely new sort of celebrity.

Smartphones and the rise of social media are a good way to understand another shift in the history of the internet: the growth of what some historians call the platform era. In the early web, the internet was mostly pages and links that you visited through a browser. With smartphones, apps, and centralized networks, information is now stored in central databases and displayed on customized platforms, sometimes as webpages on a computer, but just as often in an app or on a smartwatch. And that centralization of information, and the way that it is sliced, diced, and fed to us, is key to understanding our next topic: the dangers of the Information Age.

DANGERS & CHALLENGES OF THE INFORMATION AGE

The internet has created real benefits, but it has also created new dangers. Over time, each wave of development, from the early web, to broadband, to smartphones, to social media, and now AI, has made online life faster and more powerful. But each wave has also increased the scale of harm, because information can spread more quickly, reach more people, last longer, and be monetized more aggressively. The biggest risks today include cybercrime, privacy loss, misinformation, the digital divide, and the growing power of a few companies that control large parts of online life.

One reason these risks matter is that the internet is not “the cloud” in a magical sense. It depends on real infrastructure and on decisions about who owns and controls it. Most global internet traffic travels through undersea fiber-optic cables, and much of what we do online relies on huge data centers and cloud computing services that store and deliver videos, apps, and websites quickly. When these systems are controlled by a small number of companies, questions about competition, security, and political power become harder to ignore.

New technology brings new risks. In 1999, the Y2K Scare swept the world. To save memory, many early computer programs used only two digits for the year. That meant “1999” was stored as “99.” When 2000 arrived and the date became “00,” would computers misread the year as 1900 and cause failures in banking, power systems, transportation, and government? The disaster did not happen, partly because companies and governments spent billions fixing their code, but the scare reminded people how dependent modern life had become on computers.
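The two-digit problem is easy to demonstrate with a little arithmetic. The Python sketch below shows how a program that stored only two digits could miscalculate, along with one common style of repair known as windowing. The function names and the cutoff value of 50 are assumptions chosen for this example, and real fixes varied from system to system.

```python
def years_elapsed_two_digit(start_yy, end_yy):
    """Naive calculation using only the last two digits of each year."""
    return end_yy - start_yy

def expand_year(yy, pivot=50):
    """Windowing: treat two-digit years below `pivot` as 20xx, the rest as 19xx.

    The pivot of 50 is an assumption made for this example.
    """
    return 2000 + yy if yy < pivot else 1900 + yy

def years_elapsed_fixed(start_yy, end_yy):
    """Expand both years to four digits before subtracting."""
    return expand_year(end_yy) - expand_year(start_yy)

# An account opened in 1995 ("95") and checked in 2000 ("00"):
print(years_elapsed_two_digit(95, 0))  # -95, the kind of error people feared
print(years_elapsed_fixed(95, 0))      # 5, the correct answer
```

A bank program that believed an account was negative 95 years old could miscalculate interest or reject the record entirely, which is why so much code had to be checked before the year 2000 arrived.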

Other threats have turned out to be more serious.

One is hacking, when someone breaks into a computer system to steal information or take control. One major example was the Equifax breach (2017), in which hackers accessed sensitive personal data connected to millions of Americans. The breach showed how identity theft can become easier when large companies fail to protect user data.

Another danger is ransomware, which can lock important files and shut down services. A major example was the Colonial Pipeline ransomware attack (2021), in which hackers disrupted a major fuel pipeline by targeting the computer systems the company relied on. But cybercrime is not always a dramatic national headline. Routine phishing scams target ordinary people every day, and schools and hospitals have increasingly been hit by ransomware that disrupts learning and patient care.

Primary Source: Screenshot

A screenshot of the WannaCry ransomware attack that spread through Microsoft Windows computers in 2017. The malicious program encrypted data until users paid a ransom in bitcoin.

Countries can also engage in cyberwar, using hacking to weaken rivals by targeting infrastructure, military systems, or elections. For example, Russia has been linked to cyberattacks on Ukrainian infrastructure, including attacks that shut down parts of the electrical power grid.

The digital divide is another major issue. Not everyone has fast internet, modern devices, or strong digital skills, and that gap can deepen inequality. In the Information Age, many people have argued that access to high-speed internet should be treated like access to electricity: a basic necessity for full participation in society. In many ways, the digital divide today echoes older challenges in American history, such as in the 1930s and 1940s when the New Deal’s Rural Electrification Administration worked to bring electricity to rural areas.

Another major concern is data and privacy. Companies and governments can collect huge amounts of information about people’s behavior, purchases, and locations. Some of this is obvious, like targeted advertising. But much of it is routine and invisible. Websites and apps often rely on cross-site tracking, and a large industry of data brokers buys and sells information such as location histories, shopping habits, and demographic guesses. Over time, this creates a kind of digital “permanence,” where old posts, photos, and searches can shape someone’s reputation long after the moment has passed.

A major example of privacy concerns involving private companies was the Cambridge Analytica scandal, which showed how data connected to millions of Facebook users could be gathered and used for political targeting without clear user consent.

Businesses aren’t the only ones collecting data. After the September 11 attacks, Congress passed the USA PATRIOT Act, which expanded the government’s power to collect information in the name of preventing terrorism. In 2013, contractor Edward Snowden revealed classified information about government surveillance programs, and Americans were shocked to learn how much data their government could collect, even on people who were not suspected of any crime. Those revelations reignited debates about privacy and security that continue today.

Another major political fight has been over net neutrality. Net neutrality is the idea that internet service providers (ISPs) should treat all websites and online services the same, instead of slowing down certain content or charging extra for “fast lanes.” Supporters argue that without net neutrality, a few powerful companies could shape what people can access online by favoring some sites over others. They also argue that equal treatment protects competition, because small start-ups and independent creators can reach people without having to pay extra. Opponents argue that heavy regulation could discourage investment in broadband networks and make it harder for companies to improve service.

The debate has swung back and forth. In 2015, the FCC adopted net neutrality rules, but in 2017 those rules were repealed under the Trump administration. Since then, the issue has remained controversial because it raises a practical fairness question: If huge companies like Amazon or Netflix are willing to pay internet providers for priority delivery of their content, why shouldn’t they be allowed to? And what happens to smaller sites that cannot pay for the same advantage?

And often, money is at the heart of the challenges of the Information Age. The internet is shaped by how companies make money. For websites and social media companies, online advertising is the dominant business model. Ads are often sold through automated ad auctions, and the system rewards detailed tracking and personalization. As a result, many platforms have strong incentives to keep users scrolling and clicking.

This connects to another major shift: the rise of the attention economy. Many social media apps use recommendation systems and algorithmic feeds to decide what people see next. Features like infinite scroll and constant notifications are designed to keep users engaged, sometimes by pushing content that is emotional, extreme, or addictive. This can lead people into “rabbit holes” of increasingly intense content. Critics worry that this dynamic has contributed to growing belief in conspiracy theories and declining trust in authority.

The internet has also changed how people get news. Instead of most Americans relying on a few TV channels and local newspapers, the media environment has become more fragmented, with stories spreading through social media, podcasts, influencers, and algorithm-driven feeds. As advertising moved online, many local newspapers lost revenue, contributing to the decline of local journalism. At the same time, news aggregators and social platforms often spread the same story across multiple sites, sometimes stripping away context and making it harder to know where a story originated and whether it is trustworthy.

Social media’s rise has led to new challenges around free speech and digital trust. In the past, it was harder for false information to spread widely unless a newspaper, TV station, or radio host repeated it. Now, anyone can post almost anything, and a viral post can reach millions of people within hours. One concrete example of misinformation and manipulation came during the 2016 election, when Russia conducted online disinformation campaigns designed to inflame conflict and influence American voters.

Misinformation can also become a public health and everyday life issue. During the COVID-19 pandemic, false claims about vaccines, masks, and “cures” spread widely online, sometimes leading people to make dangerous health choices and making it harder for communities to agree on basic facts.

So, should social media companies be responsible for limiting misinformation the way newspaper editors or book publishers do? Some people argue that social media companies should behave like neutral platforms, similar to phone companies that provide the lines but do not control what people say. Others argue that social media companies are more like publishers, because they use algorithms to promote some content over other content and therefore shape what ideas spread. This debate is closely tied to Section 230 of the Communications Decency Act (1996), a law that generally protects online platforms from legal responsibility for what their users post. The future of that law, and how responsible social media platforms are for what spreads on their sites, is still an open question.

Social media has also been linked to mental health concerns. Although much of the attention has focused on teenagers, who may be especially vulnerable to pressure about how they look or act, these pressures can affect anyone. Many people experience fear of missing out, compare their lives to the carefully curated versions they see online, or fall into the trap of treating what they see on social media as completely real.

Primary Source: Screenshot

In 2026, New Jersey became one of many states to pass laws trying to restrict students’ use of cell phones at school.

These debates are not just theoretical. They shape everyday choices in schools, workplaces, and families. One of the most visible examples is the ongoing debate in many schools and states over whether smartphones should be allowed in classrooms. Supporters of stricter rules argue that phones make it harder for students to focus, increase cheating, and pull attention away from in-person relationships. They note that constant notifications make it harder to read, write, and think deeply for long periods of time. Opponents of strict bans argue that phones can be useful tools for learning, translation, research, and communication, and that students and families may rely on them for safety, scheduling, or after-school jobs.

But even when schools agree on the goal, enforcing a smartphone policy can be a practical nightmare, consuming time and energy. Teachers end up spending precious minutes arguing with and scolding students instead of focusing on instruction and learning. The debate also raises questions about fairness. If phones are allowed, students with newer devices, unlimited data, and constant social media access may have a very different classroom experience than students without those advantages. If phones are banned, students who rely on phones for translation, accessibility supports, or family responsibilities may be affected more than others. So, policy ideas that sound as simple as “no phones during school hours” quickly become a bigger argument about what is realistic and what is fair.

One final, and quite new, challenge has arisen with the advent of artificial intelligence (AI). When ChatGPT was released in 2022, it was an earthquake in the tech world, and companies rushed to integrate AI into their products and release their own AI tools. Certainly, AI has the potential to be enormously useful. It can help people write, translate, tutor, brainstorm, and automate routine work. But it can also be used to create convincing fake images, audio, and video that look and sound real. This raises difficult questions for the future. Is it acceptable for someone to create fake images or videos of you, or mimic your voice, even as a joke? Who owns your likeness, and what rights should people have to protect it? And the problem is bigger than just embarrassment or harassment. If fake evidence can be produced quickly and spread instantly, then it becomes harder for the public to agree on basic facts. People may start to doubt real footage by calling it fake, or believe fake footage because it “feels” true. So the challenge of AI is not only about new technology. It is about trust. How can we tell what is real and what is fake? If people cannot agree on what is true, can democracy function the same way?

Taken together, the challenges we’ve described in this section show a pattern: as the internet became more powerful and more central to daily life, we’ve gained new opportunities, but we also face new risks that the generations before us never had to grapple with.

THE WORLD GOES ONLINE DURING COVID

Nothing made the promises and perils of the Information Age as clear as the COVID-19 pandemic. In 2020, Americans of all ages were pushed deeper into the digital world.

During lockdowns, people who had rarely used the internet before suddenly depended on it. Work, school, medicine, and even religious services moved online. Schools switched to virtual education, and many students used online classrooms, uploaded assignments, and attended meetings on video for the first time. People who had only used a phone for voice calls, especially older adults, suddenly faced the prospect of being cut off from the outside world.

This shift created real opportunities, but it also revealed major problems. Some students lacked reliable devices or internet access, and many struggled with motivation, isolation, and mental health. In many communities, the pandemic turned internet access from a convenience into a requirement for school and daily life. The pandemic also highlighted pre-existing inequalities in access to technology, making the digital divide even more visible. Schools still had a responsibility to teach all students, but were they now responsible for making sure every student had internet access and a device?

Primary Source: Screenshot

The “I am not a Cat” viral video of a lawyer who couldn’t figure out how to turn off his cat filter on Zoom during an online court session was a humorous example of people trying to adjust to working entirely online during the pandemic.

For many Americans, platforms like Zoom and FaceTime became part of daily life for school, work, and family. Many jobs moved to remote work, and some workers never returned to the office. Hybrid work remains common in many industries. Old assumptions about commuting and office space began to be challenged, raising new questions about the future of cities, suburbs, and downtown business districts.

Healthcare changed too. Telehealth expanded rapidly and remains an important part of healthcare in many communities. Patients can now speak with doctors online, including for mental health services.

CONCLUSION

The internet has reshaped the economy, entertainment, and communication. It has opened doors for learning, creativity, and connection, but it has also created new problems: privacy risks, misinformation, cybercrime, and growing stress around screen time.

Like the railroad in the 1800s or television in the 1950s, the internet has reshaped how Americans live. But unlike those earlier technologies, it allows individuals to publish and influence millions instantly.

So, when historians look back on the past 30 years, they may say the rise of smartphones and the internet was one of the biggest forces shaping American life. They may also argue about whether its benefits outweighed its costs.

What do you think? Has the internet made America a better place?

SUMMARY

Big Idea: The internet has transformed American life by making information, communication, and commerce faster and cheaper, but it has also created new challenges around privacy, inequality, misinformation, and power.

The internet began as a government-funded Cold War project and coincided with the dawn of the Information Age, with the economy shifting away from factories and toward data, communication, and knowledge. Personal computers and the World Wide Web brought digital technology into homes, schools, and offices.

The internet revolutionized the economy and entertainment. It changed how Americans buy and sell as online sales pushed brick-and-mortar retailers out of business. At the same time, the internet lowered barriers for small entrepreneurs using sites like eBay and online payment tools. It also made gaming, streaming, and on-demand entertainment normal, as power shifted away from traditional TV and video rental stores and toward online platforms and independent creators.

Smartphones put the internet in people’s pockets. Americans began relying on apps for daily life, changing social norms and raising new concerns about attention, sleep, and in-person relationships.

The internet shifted from mostly reading pages to creating and sharing content. Social media helped people build communities and organize, but it also concentrated influence in platforms and feeds that shape what people see.

As devices and networks became more capable, online life became more integrated into everyday life. The COVID-19 pandemic accelerated the integration of digital tools even further and showed both the benefits of the internet and the costs of unequal access, as the digital divide became a major obstacle for many families and communities. Cybercrime, privacy concerns, government surveillance, net neutrality debates, and misinformation all raise questions about who controls the internet and how society can protect trust and democracy.

VOCABULARY

PEOPLE AND GROUPS

Steve Jobs: Apple leader who helped launch the iPhone in 2007, accelerating the shift to smartphones as everyday internet devices.

Larry Page & Sergey Brin: Co-founded Google (1998), which popularized a more effective way to search the growing web.

Influencer: An online personality who builds a large audience on social media and earns money through advertising, sponsorships, or product promotion.

KEY IDEAS

Hacking: Breaking into computer systems, often to steal information, disrupt services, or take control.

Identity Theft: Stealing personal information to commit fraud, such as opening accounts in someone else’s name.

Ransomware: Malware that locks files or systems until a ransom is paid.

Phishing: A scam that tricks people into revealing passwords or personal information, often through fake emails or messages.

Cyberwar: Conflict between countries that involves hacking to disrupt infrastructure or weaken rivals.

Digital Divide: The gap between people who have reliable internet access, devices, and digital skills and those who do not.

Cross-Site Tracking: A method that allows companies to record user activity across websites and apps and compile profiles of what users buy, read, watch, etc.

Government Surveillance: Government monitoring or collection of people’s communications or data, often justified for security.

Net Neutrality: The principle that internet service providers (companies that provide internet access) should treat all online traffic equally and not favor certain websites or services.

Telehealth: Healthcare services delivered through the internet (such as video appointments), allowing patients to consult with doctors remotely.

EVENTS

Information Age: A modern era, beginning in the late 1900s, in which economic and political power increasingly comes from data, communication, and knowledge.

Dot-Com Bubble: Rapid growth of internet-based companies and investment, followed by major market losses.

Y2K Scare: Late-1990s fear that computers would malfunction in 2000 because many programs stored years using only two digits.

Web 2.0: A phase of the internet when users increasingly created and shared content, not just read it.

Platform Era: A later phase of the internet when information is no longer written out into static webpages but is stored in databases and formatted into apps, feeds, and webpages that people interact with.

COVID-19 Pandemic: Public health crisis that pushed schools, work, healthcare, and services further online.

BUSINESS

Apple: Technology company that popularized the personal computer with the Mac and smartphones with the iPhone.

America Online (AOL): Company that helped many Americans get online in the 1990s through dial-up internet and an easy-to-use online service.

Google: Company that started as a search engine in the late 1990s and then expanded into many other internet products and services.

E-commerce: Online buying and selling (online shopping).

Business-to-Business (B2B): Online sales and services between companies (not directly to individual consumers).

eBay: Online marketplace that helped popularize person-to-person selling.

Gig Economy: Work built around short-term jobs, often found through apps and online platforms like Uber or DoorDash.

Netflix: Streaming platform that helped normalize on-demand TV and movie watching.

YouTube: Video platform where people can post videos and build audiences.

Facebook: Social media platform launched in 2004 that became a dominant network for online communication.

Zoom: Video meeting platform widely used for school, work, and communication.

Data Brokers: Companies that buy and sell personal data, often collected from many sources.

Attention Economy: A system in which digital platforms compete for user attention in order to sell advertising, often using algorithms and design features to keep people engaged as long as possible.

Remote Work: Working from home (or outside a traditional workplace) using the internet for communication, meetings, and job tasks.

Hybrid Work: A work arrangement that combines remote work with in-person work, such as coming into an office on some days but not others.

TECHNOLOGY

Internet: A global “network of networks” that connects computers and allows information to move between them.

ARPANET: An early computer network that connected universities and research centers and helped lay the groundwork for today’s internet. It was funded in part by the government because of Cold War fears.

Personal Computer: Smaller, affordable computer designed for home and small business use. These became common in the late 1970s and 1980s.

Macintosh: Apple’s 1984 personal computer that helped popularize a graphical user interface for everyday users.

Graphical User Interface (GUI): A computer interface that uses windows, icons, and menus instead of typed commands. This design made computers much easier for the average person to use.

World Wide Web (www): A system of web pages and hyperlinks that runs on top of the internet and is accessed through a browser.

Web Browser: Software that lets users view and navigate web pages. Examples include Chrome and Safari.

Domain Name System (DNS): The system that translates website names into the number-based addresses computers use.

Dial-Up: An early form of home internet connection that used telephone lines, which was slow and tied up the phone line while connected.

Broadband: A faster, always-on internet connection that replaced dial-up and made it easier to stream, shop online, and stay connected throughout the day.

Search Engine: A tool that helps users find information online by searching the web for keywords. Google became the dominant option by the early 2000s.

Streaming: Watching or listening to media online without downloading the entire file first.

Cell Phone: Mobile phone that connects through networks of towers (“cells”).

Cell Network: System of cell towers that allow mobile phones to communicate wirelessly.

iPhone: Apple’s smartphone (first released in 2007) that popularized the modern touchscreen smartphone and helped expand the app economy.

Smartphone: Cell phone that functions like a small computer with internet access and apps.

Social Media: Websites and apps where users create profiles, share content, and interact with others. Facebook, Instagram, and Snapchat are examples.

Data Centers: Large warehouses filled with servers that store and process online information.

Cloud Computing: Using remote servers (often in data centers) to store, run, and deliver apps and data over the internet.

Artificial Intelligence (AI): Computer systems that can imitate tasks associated with human intelligence, such as generating text, images, or audio.